
12/16/2023 Create table redshift

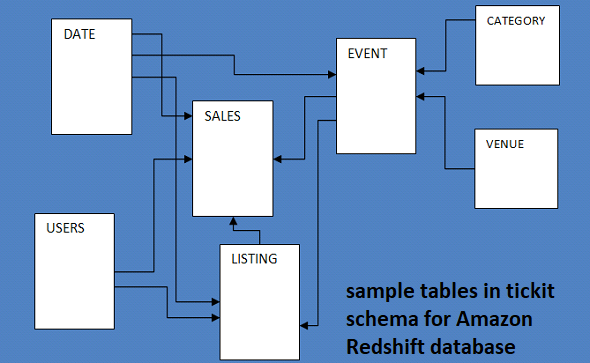

This article was published as a part of the Data Science Blogathon.

Introduction

Amazon Redshift is a data warehouse service in the cloud. Working with Redshift requires understanding several aspects of it, such as cluster architecture, table design, data loading, and query performance. Extensive documentation already covers each of these. This article summarizes them in one place so that readers can build an overall understanding before diving into each aspect individually.

A Redshift cluster is a collection of computing resources called nodes. Each cluster runs an Amazon Redshift engine and contains one or more databases. Cluster nodes are divided into a leader node and compute nodes. Each compute node is further partitioned into slices, and each slice is allocated a portion of the compute node's memory and disk space. When a table is loaded with data, its rows are distributed across the node slices. When a cluster has more than one compute node, a leader node is also created to coordinate the compute nodes and handle external communication. Compute nodes are transparent to end users.

Now that we understand Redshift cluster basics, let's look at node type, node size, and node count.

Supported node types

Amazon Redshift offers different node types for different workload requirements such as performance, data size, and future data growth. Node types are divided into previous generation and current generation; selecting from the current generation is recommended. The current generation is grouped into DS2, DC2, and RA3. Each node type comes in several node sizes, which differ in CPU, memory, and storage capacity. DC2 offers large and 8xlarge, DS2 offers xlarge and 8xlarge, and RA3 offers xlplus, 4xlarge, and 16xlarge.

Deciding on a node type

DC2 is recommended for applications with low latency and high throughput requirements and a relatively small amount of data. DS2 uses HDDs for storage, which makes it less performant than DC2 and RA3. DS2 is recommended for workloads with a relatively large, known data size that is not expected to grow rapidly, and where performance is not the primary objective. RA3 is recommended if the data is expected to grow rapidly, or if you need the flexibility to scale and pay for compute separately from storage.

Once a node type is selected based on application requirements, the next choice is the node size. Unless more resources are clearly required, start with the smaller node size, because the larger sizes of each node type are more expensive. Mixing node types or node sizes within a cluster is not supported.

The number of nodes in a cluster is determined by data volume as well as computing resource requirements. When a query is submitted to the leader node, it is passed to each slice for execution on the data present in that slice, so distributing data across many slices lets more of the work run in parallel. Once a cluster is created, the node count can be changed by resizing the cluster. Redshift supports two resize approaches, classic and elastic.
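To make the slice idea concrete, here is a minimal Python sketch of how rows might be spread across slices by a distribution key, and how a leader could combine per-slice partial results. It is only an illustration: the function names, the `customer_id` and `amount` fields, the use of `zlib.crc32` as the hash, and the `slices_per_node` value are all assumptions made for this example, not Redshift's actual internals.

```python
import zlib
from collections import defaultdict


def slice_for_key(key: str, total_slices: int) -> int:
    """Map a distribution key to a slice index using a stable hash.

    crc32 is a stand-in chosen for this sketch; Redshift's real hash
    function is internal and not reproduced here.
    """
    return zlib.crc32(key.encode("utf-8")) % total_slices


def distribute_rows(rows, key_field, node_count, slices_per_node):
    """Assign each row to one slice based on its distribution key."""
    total_slices = node_count * slices_per_node
    slices = defaultdict(list)
    for row in rows:
        slice_id = slice_for_key(str(row[key_field]), total_slices)
        slices[slice_id].append(row)
    return slices


def run_sum_query(slices, value_field):
    """Each slice sums only its own rows; the 'leader' adds the partial sums."""
    partials = {
        slice_id: sum(row[value_field] for row in slice_rows)
        for slice_id, slice_rows in slices.items()
    }
    return sum(partials.values()), partials


# Hypothetical data: 8 rows on a 2-node cluster with 2 slices per node.
rows = [{"customer_id": i, "amount": 10 * i} for i in range(1, 9)]
slices = distribute_rows(rows, "customer_id", node_count=2, slices_per_node=2)
total, partials = run_sum_query(slices, "amount")
print(total)
```

Whichever slice a row lands on, the combined total is the same. What changes with the choice of key and the number of slices is how evenly the work is divided, which is why an evenly spread distribution key matters when designing a table.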
